Project leads: Maggie Engler, Lead Data Scientist, and Lucas Wright, Senior Researcher, at the Global Disinformation Index (GDI)

Data scientists: Noah Benson and Vaughn Iverson

DSSG fellows: George Hope Chidziwisano, Richa Gupta, Kseniya Husak, Maya Luetke

Participant bios available here.

Project Summary: Online disinformation has been used as a tool to weaponize mass influence and disseminate propaganda. To combat disinformation, we need to understand efforts to disinform – both upstream (where disinformation starts) and downstream (where and how it spreads). For the nonprofit organization Global Disinformation Index (GDI, https://disinformationindex.com), financial motivation is a connecting point that links together the upstream and downstream components of disinformation. To reduce disinformation, we need to disrupt its funding. The ad tech industry has inadvertently thrown a funding line to disinformation domains through online advertising. Until now, there has been no way for advertisers to know the disinformation risk of the domains carrying their ads, as programmatic advertising means that advertisers might show ads all over the web without making a conscious decision about each location.

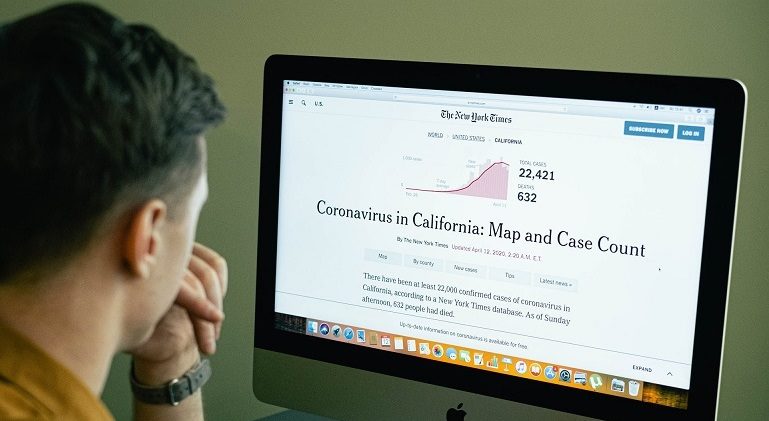

Additionally, the rise of the coronavirus crisis has further strained the online publishing industry. Controversy surrounding the coverage of the pandemic has spooked ad buyers into blocking websites using excessively broad keywords. In April, “coronavirus” overtook “Trump” as the keyword blocked by the most brands, and since news coverage has almost entirely focused on the pandemic for months, trustworthy media outlets are being starved of revenue as a result.

GDI’s solution enables ad providers to automatically detect disinformation in the form of adversarial narratives, and it is increasingly being adopted across the ad tech ecosystem. GDI researchers with expertise in disinformation have identified a list of adversarial narratives, such as the claims that vaccines cause autism and that the coronavirus was created as a bioweapon. This work draws on three components: a series of classifiers that detect articles discussing these narratives; automated collection of metadata from tens of thousands of news websites; and manual reviews by media experts of the content, context and operations of a subset of these sites.

In this project, we will use this data to construct open-source topic models that classify news articles, in real time, according to their risk of containing disinformation about the coronavirus. Much of the ad tech industry has already expressed a desire to integrate such models into their brand safety services, so there is a clear path to implementation. The goal is to ensure that reliable information about the virus receives ample funding and that the publication of harmful disinformation is disincentivized.
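To make the idea of scoring an article against adversarial narratives concrete, here is a minimal sketch. It is not GDI's classifier and not a topic model: it only measures how many terms from a hand-written list appear in an article. The term lists, the function names and the 0.5 threshold are all assumptions invented for illustration.

```python
import re

# Hypothetical term lists, invented for this sketch; they are not GDI's narratives data.
NARRATIVE_TERMS = {
    "bioweapon": {"bioweapon", "lab", "engineered", "created"},
    "vaccines_autism": {"vaccine", "vaccines", "autism"},
}


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def narrative_scores(text):
    """For each narrative, return the fraction of its terms found in the text."""
    tokens = set(tokenize(text))
    return {
        name: len(terms & tokens) / len(terms)
        for name, terms in NARRATIVE_TERMS.items()
    }


def risk_label(text, threshold=0.5):
    """Return (best_narrative, score), or (None, score) if below the assumed threshold."""
    scores = narrative_scores(text)
    name, score = max(scores.items(), key=lambda item: item[1])
    return (name, score) if score >= threshold else (None, score)


if __name__ == "__main__":
    print(risk_label("The virus was engineered in a lab as a bioweapon"))
    print(risk_label("Local bakery opens a second location downtown"))
```

A term match only indicates that an article discusses a narrative; a piece debunking the bioweapon claim would score just as high as one promoting it, which is why a real system needs trained models and expert review rather than keyword overlap.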

Learn more on the project website and project blog.